Reader behavior analytics services measure how audiences discover, read, click, scroll, and return across news content. They turn article-level activity into audience patterns, topic demand signals, and retention data for UK publishers that need clearer editorial decisions and stronger engagement outcomes.

Reader behavior analytics services collect session data, engagement events, and audience paths. They show which articles attract clicks, which pages hold attention, and which topics drive repeat visits across mobile, desktop, search, social, and email.

These services track article impressions, click-through rate, dwell time, scroll depth, exit rate, and return frequency. They also group readers by source, device, geography, and topic interest. In a UK newsroom, that data shows how readers behave during morning commute peaks, evening browsing, and weekend reading.

The output is practical. Editors see which headlines earn traffic. Analysts see where readers stop. Growth teams see which channels deliver loyal visitors. The service layer converts raw traffic into audience intelligence that supports content planning, homepage layout, and newsletter strategy.

Which data points matter most?

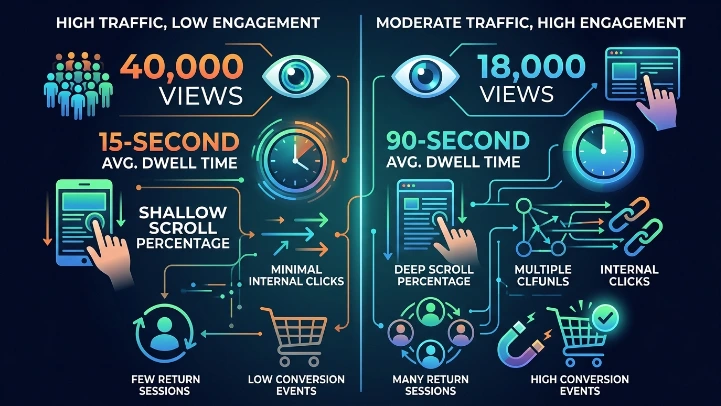

The most useful data points are page views, engaged time, scroll percentage, internal clicks, return sessions, and conversion events. A story with 40,000 views and 15-second average dwell time needs different treatment from a story with 18,000 views and 90-second dwell time.
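One way to make that contrast concrete is to multiply views by average dwell time to get total attention. The sketch below is only an illustration using the two stories from the paragraph above; the `StoryStats` class and the `total_engaged_hours` property are hypothetical names, not part of any specific analytics product.

```python
from dataclasses import dataclass


@dataclass
class StoryStats:
    """Illustrative container for two article-level metrics."""
    title: str
    views: int
    avg_dwell_seconds: float

    @property
    def total_engaged_hours(self) -> float:
        # Total attention = views x average dwell time, converted to hours.
        return self.views * self.avg_dwell_seconds / 3600


wide = StoryStats("wide reach", views=40_000, avg_dwell_seconds=15)
deep = StoryStats("deep read", views=18_000, avg_dwell_seconds=90)

for story in (wide, deep):
    print(f"{story.title}: {story.total_engaged_hours:.1f} hours")
# wide reach: 166.7 hours
# deep read: 450.0 hours
```

On these numbers the story with fewer views holds roughly 2.7 times as much total reader attention, which is why the two need different treatment.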

How do these services reveal audience demand?

These services reveal demand by linking article performance to topic interest, reading depth, and return behavior. They show what readers consume first, what keeps them reading, and what content brings them back within 24 hours or 7 days.

Topic-level analysis identifies recurring interest. A politics cluster, for example, can show high return frequency and long reading time. A live sports feed can show fast clicks, short sessions, and multiple refreshes. A business explainer can show fewer visits but deeper engagement and stronger loyalty.

This level of insight matters for UK publishers because audience demand shifts fast. Search traffic changes with news cycles. Social traffic changes with headline format. Newsletter traffic changes with subject line relevance. Reader behavior analytics services connect those shifts to concrete content patterns.

What does demand look like in practice?

Demand appears in repeated topic visits, long scroll depth on explainers, and strong internal click chains. A reader who opens three inflation stories in one session shows clear economic interest.
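The inflation example can be sketched as a simple count of topic tags within one session. This is a minimal illustration, assuming each page view already carries a topic tag; the function name and the threshold of three repeats are assumptions chosen to mirror the example, not a standard.

```python
from collections import Counter


def session_topic_interest(session_topics, min_repeats=3):
    """Return topics opened at least `min_repeats` times in one session.

    `session_topics` is a list of topic tags, one per article opened.
    The threshold is an assumed value for illustration.
    """
    counts = Counter(session_topics)
    return sorted(topic for topic, n in counts.items() if n >= min_repeats)


session = ["inflation", "inflation", "football", "inflation"]
print(session_topic_interest(session))  # ['inflation']
```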

Which service types exist in the market?

Reader behavior analytics services fall into three types: real-time services, predictive services, and virality-focused services. Real-time tools show live engagement, predictive tools forecast future demand, and virality tools identify stories that spread quickly across networks.

Real-time services track readers while the story is active. They show live concurrent users, active scrolls, and spike timing. Predictive services use historical patterns to estimate future engagement, return visits, or topic growth. Virality-focused services measure share velocity, referral bursts, and social spread.

A UK publisher uses these service types for different goals. Live news teams rely on real-time tracking. Audience teams rely on forecasting. Social teams rely on viral spread detection. The best fit depends on whether the goal is speed, prediction, or distribution.

How do service types differ?

Real-time services prioritise immediacy. Predictive services prioritise trend detection. Virality services prioritise referral momentum. Each type answers a different editorial question.

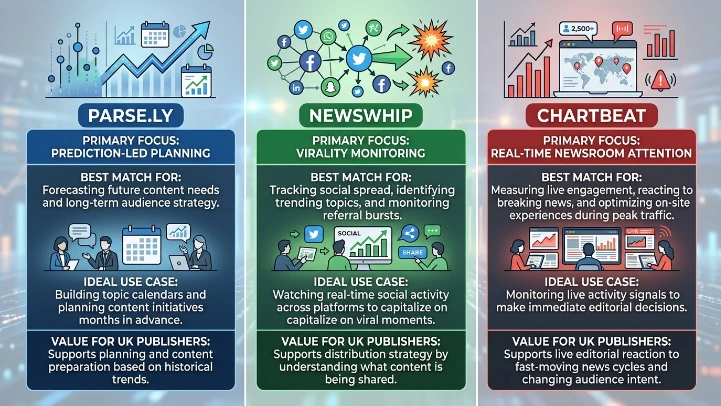

Top services compare on speed, predictive strength, and audience visibility: Parse.ly excels in predictions, NewsWhip in virality, and Chartbeat in real-time engagement. Parse.ly suits planning and topic forecasting, NewsWhip suits share detection, and Chartbeat suits live newsroom monitoring.

How to Track and Analyse Reader Behavior

What do these services measure at article level?

These services measure article-level performance through impressions, click-through rate, dwell time, scroll depth, exit point, and internal pathway data. They show how each story performs from headline exposure to final exit.

Article-level measurement starts with the headline. If the headline earns impressions but low clicks, the packaging is weak. If clicks rise but dwell time falls, the content promise is mismatched. If dwell time and scroll depth both rise, the article matches reader intent.
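The three checks above can be expressed as a small rule set. The sketch below is a simplification: it uses fixed thresholds rather than rising or falling trends, and every threshold value (`min_ctr`, `min_dwell`, `min_scroll`) is an assumption that a newsroom would need to calibrate against its own data.

```python
def diagnose_article(impressions, clicks, dwell_seconds, scroll_depth,
                     min_ctr=0.02, min_dwell=30.0, min_scroll=0.5):
    """Classify an article using the headline-to-exit logic.

    scroll_depth is a fraction between 0 and 1.
    All thresholds are illustrative assumptions.
    """
    if impressions <= 0:
        return "no exposure data"
    ctr = clicks / impressions  # click-through rate
    if ctr < min_ctr:
        return "weak packaging"          # seen, but rarely clicked
    if dwell_seconds < min_dwell:
        return "promise mismatch"        # clicked, but quickly abandoned
    if scroll_depth >= min_scroll:
        return "matches reader intent"   # clicked, read, and scrolled
    return "inconclusive"


print(diagnose_article(10_000, 100, 60, 0.8))  # weak packaging
print(diagnose_article(10_000, 500, 10, 0.2))  # promise mismatch
print(diagnose_article(10_000, 500, 60, 0.8))  # matches reader intent
```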

Service dashboards also show section-level behavior: where readers stop, whether at charts, quotes, or long background paragraphs, and how they move from one story to another through related links. That path reveals content interest that goes beyond one article. It also shows whether the site's structure and internal linking support deeper reading.

Why does article-level analysis matter?

Article-level analysis matters because editorial decisions happen at story level. A strong service report identifies the exact article format, headline style, and topic that performs best.

How do services segment audiences?

Reader behavior analytics services segment audiences by source, device, location, frequency, and topic affinity. Segmentation groups readers into meaningful clusters such as first-time visitors, returning readers, mobile skimmers, and high-intent topic followers.

Source segmentation shows whether readers arrive from Google, direct traffic, social platforms, or newsletters. Device segmentation separates mobile and desktop reading. Location segmentation separates UK regions such as London, Manchester, and Scotland. Frequency segmentation shows daily, weekly, and one-time readers.
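Frequency segmentation, for instance, can be sketched as a bucketing rule on how many distinct days a reader was active. The cut-offs below are assumptions for illustration, and the "occasional" bucket is an addition to cover readers who fall between one-time and weekly; actual services define these segments in their own ways.

```python
def frequency_segment(active_days_last_30: int) -> str:
    """Assign a reader to a frequency segment.

    Input is the number of distinct days with a visit in the last 30 days.
    Thresholds are assumed values, not an industry standard.
    """
    if active_days_last_30 >= 20:
        return "daily"
    if active_days_last_30 >= 4:
        return "weekly"
    if active_days_last_30 >= 2:
        return "occasional"  # assumed extra bucket between one-time and weekly
    return "one-time"


readers = {"reader_a": 26, "reader_b": 5, "reader_c": 1}
segments = {reader: frequency_segment(days) for reader, days in readers.items()}
print(segments)
# {'reader_a': 'daily', 'reader_b': 'weekly', 'reader_c': 'one-time'}
```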

Topic affinity segmentation is especially important. A reader who repeatedly opens health stories behaves differently from a reader who reads only breaking politics. A service that identifies these groups helps newsrooms assign content to audience needs with more precision.

What makes segmentation useful?

Segmentation turns one large audience into smaller, readable groups. Those groups make performance patterns easier to explain and easier to act on.

What benefits do publishers get?

Publishers get five benefits from reader behavior analytics services: clearer editorial planning, higher retention, better headline testing, stronger topic alignment, and improved revenue strategy. These benefits come from direct measurement of what readers read, skip, share, and revisit.

Clearer planning comes from knowing which subjects deliver consistent engagement. Higher retention comes from improving story structure and internal linking. Better headline testing comes from comparing click-through rates across title variants. Stronger topic alignment comes from matching coverage to repeat interest. Revenue strategy improves when audience quality, not just traffic volume, becomes visible.
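Headline testing by click-through rate can be sketched in a few lines. This is a minimal illustration with made-up numbers; `best_headline` is a hypothetical helper, and it deliberately ignores statistical significance, which a real test would need to check before declaring a winner.

```python
def best_headline(variants: dict[str, tuple[int, int]]) -> tuple[str, float]:
    """Pick the title variant with the highest click-through rate.

    `variants` maps each title to (impressions, clicks).
    Variants with zero impressions are skipped.
    """
    rates = {
        title: clicks / impressions
        for title, (impressions, clicks) in variants.items()
        if impressions > 0
    }
    if not rates:
        raise ValueError("no variant has impressions")
    winner = max(rates, key=rates.get)
    return winner, rates[winner]


# Example figures are invented for illustration only.
test_variants = {"Variant A": (5_000, 150), "Variant B": (5_000, 210)}
title, ctr = best_headline(test_variants)
print(f"{title}: {ctr:.1%}")  # Variant B: 4.2%
```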

UK publishers also use these services to support newsletter growth, homepage prioritisation, and subscription funnels. A reader who repeatedly visits long-form analysis is worth more to the publisher than a reader who clicks once and leaves. The service data makes that difference visible.

What improves first?

Headline clarity improves first in many newsrooms. When editors see that click-heavy headlines lose readers early, they tighten language and improve intent match.

Which use cases matter most in the UK?

UK use cases include breaking-news monitoring, election coverage analysis, local news engagement, newsletter optimisation, and subscription-intent tracking. These uses reflect mobile-first reading, fast news cycles, and strong competition for attention across national and regional publishers.

Breaking-news monitoring tracks live spikes and drop-offs. Election coverage analysis tracks issue-level interest, regional response, and repeat sessions. Local news engagement tracks how readers in specific counties interact with nearby issues. Newsletter optimisation tracks which articles drive opens, clicks, and repeat site visits. Subscription-intent tracking shows which readers return often, read deeply, and engage with premium-style content.

These use cases work best when the newsroom uses one service layer across the full content cycle. The team sees early interest, mid-session behavior, and post-read return patterns in one place. That creates a complete view of audience demand rather than isolated traffic snapshots.

How do regional patterns differ?

Regional patterns differ by topic and device. London readers often show stronger morning peaks. Regional local-news readers often show higher return frequency when coverage matches community issues.

How do service results support content decisions?

Service results support content decisions by showing which stories deserve updates, which formats need restructuring, and which topics need deeper coverage. They also show where internal links, section placement, and article length affect reader retention.

An editor can use the data to decide whether a story needs a shorter introduction, a clearer subheading structure, or a different angle. A homepage editor can use it to move high-interest stories higher on the page. A strategy team can use it to build topic clusters around proven interest areas.

This is where reader behavior analytics services become decision systems. They do not only report what happened. They also show where the audience pays attention, where it loses interest, and where it returns. That makes them useful for editorial, audience, and commercial teams at the same time.

What happens after the first report?

After the first report, teams compare article patterns across 7-day and 30-day periods. That comparison shows whether a trend is temporary or stable.
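One simple way to run that comparison is to set the 7-day average against the 30-day average for the same article or topic. The sketch below assumes a list of daily view counts, oldest first; the `tolerance` of 20% and the label names are assumptions for illustration.

```python
def trend_label(daily_views: list[int], tolerance: float = 0.2) -> str:
    """Compare the last 7 days with the last 30 days of daily views.

    Returns "stable" when the 7-day average is within `tolerance`
    of the 30-day average, otherwise "recent spike" or "fading".
    """
    if len(daily_views) < 30:
        raise ValueError("need at least 30 days of data")
    avg_7 = sum(daily_views[-7:]) / 7
    avg_30 = sum(daily_views[-30:]) / 30
    if avg_30 == 0:
        return "no baseline"
    ratio = avg_7 / avg_30
    if ratio > 1 + tolerance:
        return "recent spike"  # may be temporary; re-check next period
    if ratio < 1 - tolerance:
        return "fading"
    return "stable"


steady = [1_000] * 30
spiking = [1_000] * 23 + [3_000] * 7
print(trend_label(steady))   # stable
print(trend_label(spiking))  # recent spike
```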

What does a strong service setup include?

A strong setup includes clean event tracking, consistent definitions, topic tagging, audience segmentation, and dashboard reporting. These five parts keep the data accurate and make reader behavior easy to interpret across articles and channels.

Clean event tracking records page views, scrolls, clicks, and exits with the same rules on every page. Consistent definitions prevent confusion between engaged reads and idle open tabs. Topic tagging groups articles into usable content categories. Audience segmentation identifies repeat visitors and source groups. Dashboard reporting presents the results in a format editors can read quickly.
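A shared definition of an engaged read can be written down as one function that every team uses. The sketch below is an assumption-heavy illustration: the `PageVisit` fields, the 15-second active-time minimum, and the 25% scroll minimum are all hypothetical, chosen only to show how an idle open tab is separated from a real read.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PageVisit:
    active_seconds: float    # time with scroll, click, or key activity
    open_seconds: float      # total time the tab was open
    max_scroll_depth: float  # fraction of the page reached, 0 to 1


def is_engaged_read(visit: PageVisit,
                    min_active: float = 15.0,
                    min_scroll: float = 0.25) -> bool:
    """Apply one shared rule: count active time, not open-tab time."""
    return (visit.active_seconds >= min_active
            and visit.max_scroll_depth >= min_scroll)


idle_tab = PageVisit(active_seconds=2, open_seconds=1_800, max_scroll_depth=0.0)
real_read = PageVisit(active_seconds=45, open_seconds=60, max_scroll_depth=0.7)
print(is_engaged_read(idle_tab))   # False
print(is_engaged_read(real_read))  # True
```

Keeping the rule in one place means a change to the thresholds applies to every report at once.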

Without these parts, behavior data stays fragmented. With them, a newsroom sees how readers move through stories, which formats win attention, and which subjects create the strongest audience response.

Why does consistency matter?

Consistency matters because service data loses value when one team measures dwell time differently from another. Shared definitions keep reporting reliable.

Which service comparison helps most?

A practical comparison starts with use case, not feature count. Parse.ly fits prediction-led planning, NewsWhip fits virality monitoring, and Chartbeat fits real-time newsroom attention. The best match depends on whether the goal is forecasting, sharing, or live engagement.

Prediction-led planning suits teams that build topic calendars and long-term audience strategy. Virality monitoring suits teams that watch social spread and referral bursts. Real-time newsroom attention suits teams that need live activity signals during breaking news and peak traffic hours.

For UK publishers, that distinction matters because speed and audience intent change fast. One service supports planning. One supports distribution. One supports live editorial reaction. The value comes from matching the service type to the newsroom objective.

How do teams choose?

Teams choose by asking one question: do they need live visibility, spread detection, or prediction? The answer determines the service category.