Reader behavior analysis tracks how audiences discover, read, scroll, click, and return to news content. It uses event data, session data, and engagement metrics to explain what readers consume and where they stop. This topic matters in the UK market because mobile reading, fast news cycles, and intense competition among sources shape audience attention.

Reader behavior means the measurable actions a person takes while consuming news content, including impressions, clicks, dwell time, scroll depth, exits, shares, and return visits. These signals show interest, reading depth, and loyalty across devices and article types.

In news analytics, reader behavior starts at the first exposure. A reader sees a headline in search, social, homepage, or email. The next action is a click, a skip, or a save. After the click, the system records reading depth, time on page, and exit point.

The main value of these signals is clarity. A newsroom sees which topics attract attention, which formats hold attention, and which pages lose readers early. That clarity supports editorial planning, headline testing, article length decisions, and layout improvements.

Which signals matter most?

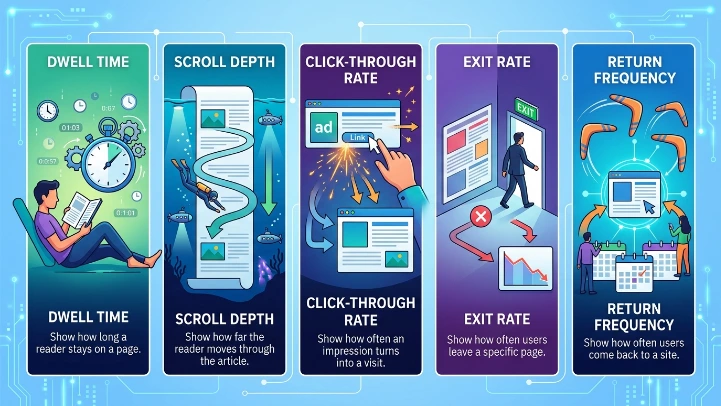

The most useful signals are dwell time, scroll depth, click-through rate, exit rate, and return frequency. Dwell time shows how long a reader stays on a page. Scroll depth shows how far the reader moves through the article. Click-through rate shows how often an impression turns into a visit. Exit rate shows how often a page is the last one viewed in a session. Return frequency shows how often a reader comes back.
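
Click-through rate is the simplest of these signals to compute. The sketch below is a minimal illustration, not a reference implementation. The function name, the placement names, and the counts are all hypothetical.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """Share of impressions that turned into a visit, as a fraction from 0 to 1."""
    if impressions <= 0:
        return 0.0  # no exposure recorded, so no rate can be computed
    return clicks / impressions


# Hypothetical counts for one headline shown in two placements.
placements = {
    "homepage": {"impressions": 12_000, "clicks": 540},
    "newsletter": {"impressions": 3_000, "clicks": 270},
}

for name, counts in placements.items():
    rate = click_through_rate(counts["clicks"], counts["impressions"])
    print(f"{name}: {rate:.1%}")
```

Guarding against zero impressions keeps a new or unplaced headline from raising an error in a report.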

How do you collect reader data?

Reader data comes from page tags, event tracking, server logs, and analytics dashboards. Each method records a different layer of behavior, from page load and click events to reading duration and navigation paths across devices.

Page tags place code on article pages and fire events when a reader loads content, clicks links, or reaches certain scroll points. Event tracking records actions such as opening a section, pausing on a chart, or leaving the page. Server logs capture requests and delivery patterns. Dashboards group the data into reports.

This process starts with a clear measurement plan. A newsroom defines the actions it wants to measure, then maps those actions to events. For example, a long-form investigation uses scroll milestones at 25, 50, 75, and 100 percent. A live blog uses refresh frequency and return sessions instead of long dwell time.
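
The scroll milestones in that example can be mapped to events with very little logic. The following is a minimal sketch, assuming the page tag reports the deepest scroll position as a percentage. The event names such as `scroll_25` are illustrative, not a standard.

```python
MILESTONES = (25, 50, 75, 100)  # percent of article length, as in the example above


def milestones_reached(max_scroll_percent: float) -> list[int]:
    """Return every milestone at or below the deepest scroll position."""
    return [m for m in MILESTONES if max_scroll_percent >= m]


def new_milestone_events(previous_percent: float, current_percent: float) -> list[str]:
    """Return event names for milestones crossed since the last measurement."""
    already_sent = set(milestones_reached(previous_percent))
    return [
        f"scroll_{m}"  # hypothetical event naming scheme
        for m in milestones_reached(current_percent)
        if m not in already_sent
    ]


print(new_milestone_events(30, 80))  # ['scroll_50', 'scroll_75']
```

Comparing against the previous position means each milestone fires once per page view, even when the reader scrolls back up and down again.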

The data then enters a reporting layer. That layer turns raw events into session counts, article averages, and audience segments. From there, editors see patterns by topic, device, source, and time of day.
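
A reporting layer of this kind can be approximated with a small grouping function. This is a sketch under stated assumptions: the event fields (`session_id`, `article_id`, `device`, `dwell_seconds`, `scroll_percent`) are hypothetical, and a production pipeline would usually run in a data warehouse rather than in application code.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical raw events, one row per page view.
events = [
    {"session_id": "s1", "article_id": "a1", "device": "mobile", "dwell_seconds": 40, "scroll_percent": 30},
    {"session_id": "s2", "article_id": "a1", "device": "desktop", "dwell_seconds": 80, "scroll_percent": 90},
    {"session_id": "s2", "article_id": "a2", "device": "desktop", "dwell_seconds": 20, "scroll_percent": 10},
]


def summarise(rows: list[dict], key: str) -> dict[str, dict]:
    """Group rows by one field and report session counts and averages."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row[key]].append(row)

    summary = {}
    for value, group in grouped.items():
        summary[value] = {
            "sessions": len({r["session_id"] for r in group}),  # distinct sessions
            "avg_dwell_seconds": mean(r["dwell_seconds"] for r in group),
            "avg_scroll_percent": mean(r["scroll_percent"] for r in group),
        }
    return summary


by_article = summarise(events, "article_id")
by_device = summarise(events, "device")
```

The same function serves article, device, source, or time-of-day reports, because only the grouping key changes.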

What counts as good tracking design?

Good tracking design uses consistent event names, fixed time windows, and the same metrics across article types. It also separates page views from engaged views, because a page open does not equal a full read.
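
The split between page views and engaged views can be expressed as a single rule applied to every article type. The thresholds below (15 seconds and 25 percent scroll) are assumptions chosen for illustration. Each newsroom sets its own.

```python
from dataclasses import dataclass

# Assumed thresholds; tune them against your own data.
MIN_ENGAGED_SECONDS = 15
MIN_ENGAGED_SCROLL_PERCENT = 25


@dataclass
class PageView:
    article_id: str
    dwell_seconds: float
    scroll_percent: float


def is_engaged(view: PageView) -> bool:
    """A view counts as engaged only if it passes both thresholds."""
    return (
        view.dwell_seconds >= MIN_ENGAGED_SECONDS
        and view.scroll_percent >= MIN_ENGAGED_SCROLL_PERCENT
    )


def count_views(views: list[PageView]) -> dict[str, int]:
    """Report page views and engaged views as separate numbers."""
    engaged = sum(1 for v in views if is_engaged(v))
    return {"page_views": len(views), "engaged_views": engaged}


sample = [
    PageView("a1", dwell_seconds=4, scroll_percent=5),    # opened, then left
    PageView("a1", dwell_seconds=70, scroll_percent=80),  # read most of the story
    PageView("a1", dwell_seconds=30, scroll_percent=10),  # stayed, barely scrolled
]
print(count_views(sample))  # {'page_views': 3, 'engaged_views': 1}
```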

What Are Reader Behavior Patterns in News?

Which metrics explain reading depth?

Reading depth comes from three core metrics: dwell time, scroll depth, and active attention signals. These metrics show whether a reader scans the first lines, reads several sections, or reaches the end of a story.

Dwell time measures how long a page stays open in a session. Scroll depth measures the percentage of article length reached. Active attention signals include tab focus, interaction pauses, and movement within the content area.

These metrics work together. A 90-second dwell time with 20 percent scroll depth signals a skim. A 90-second dwell time with 85 percent scroll depth signals deeper reading. A high dwell time alone does not prove engagement because a tab can stay open without active reading.

Newsrooms use these signals to classify content. Straight news often produces fast exits. Explainers and analysis pieces produce longer sessions. List articles and live updates often generate repeated visits and lower average scroll depth.

How do teams compare article types?

Teams compare article types by applying the same measurement rules to every story. They then review averages for politics, business, sport, culture, and breaking news.

How do you analyze audience journeys?

Audience journey analysis maps the path from discovery to exit. It connects source, landing page, internal clicks, time on section pages, and return visits to show how readers move through a news site.

The first step is source analysis. This shows whether the reader arrived from search, homepage, social media, or newsletter. The second step is landing-page analysis. This shows which article types start the session. The third step is path analysis. This shows what the reader opens next.
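
The three steps can be sketched as one pass over ordered page views. This is a minimal illustration, assuming each page view carries a `session_id`, a numeric `timestamp`, a `source`, and an `article_type`. All of these field names are hypothetical.

```python
from collections import defaultdict


def build_journeys(page_views: list[dict]) -> dict[str, dict]:
    """Reconstruct source, landing page type, and path for each session."""
    sessions = defaultdict(list)
    for view in sorted(page_views, key=lambda v: v["timestamp"]):
        sessions[view["session_id"]].append(view)

    journeys = {}
    for session_id, views in sessions.items():
        first = views[0]
        journeys[session_id] = {
            "source": first["source"],              # step 1: source analysis
            "landing_type": first["article_type"],  # step 2: landing-page analysis
            "path": [v["article_type"] for v in views],  # step 3: path analysis
        }
    return journeys


# Hypothetical page views, deliberately out of order.
views = [
    {"session_id": "s1", "timestamp": 20, "source": "search", "article_type": "explainer"},
    {"session_id": "s1", "timestamp": 10, "source": "search", "article_type": "breaking"},
    {"session_id": "s2", "timestamp": 15, "source": "newsletter", "article_type": "analysis"},
    {"session_id": "s1", "timestamp": 30, "source": "search", "article_type": "profile"},
]

journeys = build_journeys(views)
print(journeys["s1"]["path"])  # ['breaking', 'explainer', 'profile']
```

Sorting by timestamp before grouping matters, because events often arrive out of order and the landing page must be the earliest view in the session.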

Journey analysis matters because news reading rarely stops at one page. A reader may enter through a breaking story, then move to an explainer, then open a profile piece. That sequence reveals topic interest and navigation quality. It also shows whether internal links support deeper site use.

A newsroom can build audience groups from these paths. One group reads only headline updates. Another group reads multiple stories on one topic. Another group returns daily from email. Each group has different content needs and different reading depth.

Why do return visits matter?

Return visits show loyalty. A reader who comes back within 24 hours behaves differently from a one-time visitor. Return patterns also reveal whether a topic creates ongoing interest.
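
The 24-hour check can be sketched with standard date arithmetic. This assumes each timestamp marks the start of a separate session for one reader. How sessions are delimited is outside this example.

```python
from datetime import datetime, timedelta


def returned_within(session_starts: list[datetime],
                    window: timedelta = timedelta(hours=24)) -> bool:
    """True if any two consecutive sessions start within the window."""
    ordered = sorted(session_starts)
    return any(
        later - earlier <= window
        for earlier, later in zip(ordered, ordered[1:])
    )


# Hypothetical session start times for two readers.
daily_reader = [datetime(2024, 3, 1, 8, 0), datetime(2024, 3, 1, 19, 30)]
occasional_reader = [datetime(2024, 3, 1, 8, 0), datetime(2024, 3, 4, 8, 0)]

print(returned_within(daily_reader))       # True
print(returned_within(occasional_reader))  # False
```

A reader with a single session returns `False`, which matches the one-time visitor described above.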

Which components belong in a reader behavior dashboard?

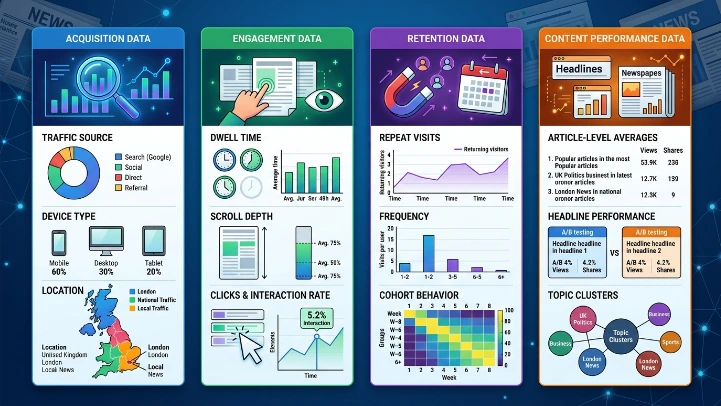

A reader behavior dashboard includes acquisition data, engagement data, retention data, and content performance data. Together, these components show where readers come from, what they read, how long they stay, and whether they return.

Acquisition data covers traffic source, device type, and location. Engagement data covers dwell time, scroll depth, clicks, and interaction rate. Retention data covers repeat visits, frequency, and cohort behavior. Content performance data covers article-level averages, headline performance, and topic clusters.

A useful dashboard keeps these groups separate. Source data explains entry points. Engagement data explains attention. Retention data explains loyalty. Content data explains what subjects work best.

For a UK newsroom, regional splits also matter. London traffic, national traffic, and local traffic behave differently. Mobile traffic also behaves differently from desktop traffic. These differences affect both how a report should be read and what editors do in response.

What dashboard views help editors most?

The most useful views are article performance, topic performance, source mix, and returning audience trends. These views show both immediate interest and long-term audience value.

What benefits come from tracking reader behavior?

Tracking reader behavior improves editorial planning, headline clarity, article structure, and audience retention. It also identifies high-performing topics, weak pages, and reading patterns that support stronger distribution decisions.

Editorial teams use behavior data to refine what they publish. If readers stop after the first paragraph, the introduction needs work. If readers finish a long article, the format supports deeper coverage. If a topic attracts repeat sessions, it deserves follow-up reporting.

Behavior analysis also improves headline decisions. A headline that earns clicks but loses readers after 10 seconds signals a mismatch between what the headline promises and what the article delivers. A headline that attracts clicks and supports long reading shows alignment between promise and content.

Retention also improves when teams understand patterns. A reader who often opens politics or business stories has a clear content preference. A newsroom can build topic pages, newsletters, or article recommendations around that preference. That process increases repeat visits and session depth.

How does analysis support newsroom planning?

Analysis supports planning by showing which topics deserve more coverage, which formats need simplification, and which publishing times produce the strongest engagement.

What use cases matter in the UK market?

UK newsrooms use reader behavior analysis for breaking-news monitoring, election coverage, local reporting, newsletter planning, and homepage design. These use cases depend on fast feedback, mobile reading, and strong topic competition across national and regional outlets.

Breaking-news monitoring tracks live traffic bursts and exit points. Election coverage tracks topic interest by constituency, issue, and timestamp. Local reporting tracks regional readership and repeat visits. Newsletter planning tracks which articles drive email opens and later site visits. Homepage design tracks which slots earn the most clicks and read-through.

These use cases share one goal. They turn raw clicks into editorial direction. A newsroom that sees strong engagement on a topic can expand coverage. A newsroom that sees weak engagement can change headline structure, article order, or placement.

The same logic applies to content formats. Short updates serve immediate news demand. Explainers serve readers who want context. Analysis pieces serve readers who want depth. Reader behavior data shows which format fits which story.

How do topic clusters help?

Topic clusters group related stories under one theme. This structure reveals whether readers stay with a subject across multiple pages or leave after the first article.

What separates tracking from analysis?

Tracking records actions. Analysis explains them. Tracking captures the data points. Analysis turns those data points into patterns, segments, and decisions that improve content strategy and audience understanding.

This difference matters because raw data alone has limited value. A dashboard can show 50,000 page views, 3,000 exits, and 2,100 long reads. Analysis explains what those numbers mean. It identifies patterns by source, section, device, and article type.
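
A first step from numbers to meaning is to turn the counts into rates. The sketch below uses the figures from the example and assumes that exits and long reads are both counted against total page views, which is one possible definition among several.

```python
def rate(part: int, total: int) -> float:
    """Return part as a fraction of total, or 0.0 when total is zero."""
    return part / total if total else 0.0


page_views = 50_000
exits = 3_000
long_reads = 2_100

exit_rate = rate(exits, page_views)
long_read_rate = rate(long_reads, page_views)

print(f"exit rate: {exit_rate:.1%}")            # exit rate: 6.0%
print(f"long-read rate: {long_read_rate:.1%}")  # long-read rate: 4.2%
```

The same two rates, computed per source, section, device, or article type, are what allow one story to be compared with another.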

A strong process starts with tracking rules, then moves into interpretation. The newsroom defines what counts as a read, a scroll, and a return. After that, analysts compare articles, time periods, and audiences. The result is a clear view of how readers behave, not just how many clicks a story receives.

For readers, the visible result is better content structure. For editors, the result is stronger decision-making. For the site, the result is a more accurate understanding of audience demand.