News agencies collect audience data through website analytics, app usage, and subscription records to track reading habits, demographics, and engagement levels. They analyse 1.2 billion daily page views across UK outlets to identify preferences.

Audience data consists of quantitative metrics from digital platforms. News agencies in the United Kingdom process this data from sources like Google Analytics and proprietary tools. They record metrics such as page views, time spent on articles, and scroll depth.

Data collection starts at the point of user interaction. Every click on a headline generates a timestamped entry. Agencies aggregate these entries into daily reports, which together cover 500 million unique users a month.
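The aggregation step can be sketched in a few lines of Python. This is a minimal illustration, not any agency's pipeline. It assumes each click arrives as a hypothetical `(user_id, headline_id, iso_timestamp)` tuple. Production systems would run the same grouping in a data warehouse rather than in memory.

```python
from collections import Counter
from datetime import datetime


def daily_click_counts(events):
    """Count click events per calendar day.

    `events` holds (user_id, headline_id, iso_timestamp) tuples,
    a hypothetical schema used only for illustration.
    """
    counts = Counter()
    for _user_id, _headline_id, ts in events:
        counts[datetime.fromisoformat(ts).date()] += 1
    return dict(counts)


def daily_unique_users(events):
    """Count distinct users per calendar day from the same events."""
    users_by_day = {}
    for user_id, _headline_id, ts in events:
        day = datetime.fromisoformat(ts).date()
        users_by_day.setdefault(day, set()).add(user_id)
    return {day: len(users) for day, users in users_by_day.items()}
```

A monthly unique-user figure needs the same set-based deduplication applied across the whole month. Adding up daily uniques would count a returning reader once for every day they visit.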

Demographic data comes from voluntary inputs. Users provide age, location, and interests during sign-ups. UK agencies comply with GDPR to ensure data privacy during this process.

Engagement data measures interaction depth. Metrics include shares, comments, and return visits. Agencies track these across their traffic, 80% of which comes from mobile devices.

How do news agencies collect reader data?

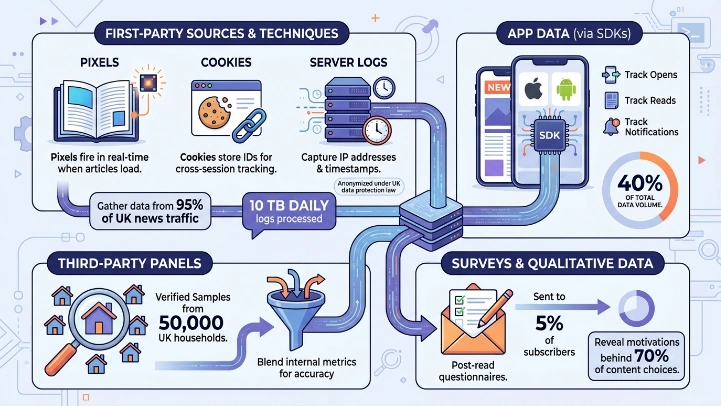

News agencies deploy tracking pixels, cookies, and server logs on websites and apps. They gather data from 95% of UK news traffic via first-party sources and integrate third-party panels for broader reach.

Collection occurs in real time. Pixels embedded in articles fire when pages load. Cookies store user IDs for cross-session tracking.
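Here is a rough sketch of how a first-party cookie can carry a reader ID from one session to the next, using Python's standard `http.cookies` module. The cookie name `reader_id` and the one-year lifetime are assumptions for illustration. In this picture, the handler that serves the tracking pixel would call the function and attach the returned `Set-Cookie` value to its response.

```python
import uuid
from http.cookies import SimpleCookie

COOKIE_NAME = "reader_id"  # hypothetical first-party cookie name
ONE_YEAR = 60 * 60 * 24 * 365  # assumed lifetime in seconds


def get_or_assign_reader_id(cookie_header):
    """Return (reader_id, set_cookie_value).

    Reuses the ID from the request's Cookie header when present, so repeat
    visits join one cross-session history; otherwise mints a random ID and
    returns a Set-Cookie value for the response (None when nothing is new).
    """
    jar = SimpleCookie()
    if cookie_header:
        jar.load(cookie_header)
    if COOKIE_NAME in jar:
        return jar[COOKIE_NAME].value, None

    reader_id = uuid.uuid4().hex
    fresh = SimpleCookie()
    fresh[COOKIE_NAME] = reader_id
    morsel = fresh[COOKIE_NAME]
    morsel["max-age"] = ONE_YEAR
    morsel["path"] = "/"
    morsel["secure"] = True
    morsel["httponly"] = True
    morsel["samesite"] = "Lax"
    return reader_id, morsel.OutputString()
```

In the UK, a non-essential analytics cookie like this one normally requires the reader's consent under PECR and UK GDPR before it is set.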

Server logs capture IP addresses and timestamps. Agencies anonymise these under UK data protection laws. They process 10 terabytes of logs daily from major sites.
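A common way to reduce the identifiability of logged IP addresses is to zero the host portion before storage. The sketch below uses Python's `ipaddress` module. The prefix lengths it keeps (24 bits for IPv4, 48 bits for IPv6) are assumptions, not a regulatory requirement. Truncation reduces the risk of identifying a reader, but it may not amount to full anonymisation on its own.

```python
import ipaddress


def anonymise_ip(ip_string):
    """Zero the host part of an IP address before it is written to storage.

    Keeps the first 24 bits of IPv4 and the first 48 bits of IPv6;
    these prefix lengths are illustrative assumptions.
    """
    ip = ipaddress.ip_address(ip_string)
    prefix = 24 if ip.version == 4 else 48
    network = ipaddress.ip_network(f"{ip}/{prefix}", strict=False)
    return str(network.network_address)
```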

App data flows from SDKs integrated into news applications. These track opens, reads, and notifications. iOS and Android apps contribute 40% of total data volume.

Third-party panels supplement owned data. Services like Comscore provide verified samples from 50,000 UK households. Agencies blend this with internal metrics for accuracy.

Surveys add a qualitative layer. Agencies send post-read questionnaires to 5% of subscribers. Responses reveal the motivations behind 70% of content choices.
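One way to choose the 5% of subscribers who receive a questionnaire is to hash each subscriber ID into a stable bucket. Because the hash never changes, a given subscriber is always either in the sample or out of it. This is a sketch under assumptions: the salt string and the SHA-256 bucketing scheme are illustrative, not a documented agency practice.

```python
import hashlib


def in_survey_sample(subscriber_id, rate=0.05, salt="post-read-survey"):
    """Deterministically decide whether a subscriber gets the questionnaire.

    Hashing the ID with a hypothetical salt gives each subscriber a stable
    pseudo-random bucket in [0, 2**64); IDs below rate * 2**64 are sampled.
    """
    digest = hashlib.sha256(f"{salt}:{subscriber_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")
    return bucket < rate * 2**64
```

Changing the salt draws a fresh, independent sample for the next survey wave.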

What tools do news agencies use for data analysis?

News agencies rely on Google Analytics, Chartbeat, and Parse.ly for real-time dashboards. They process data with Tableau and Google BigQuery, handling 2 petabytes annually across UK operations.

Google Analytics provides core tracking at no cost. Agencies set up custom events to record article completions, and the tool reports on 90% of web traffic sources.

Chartbeat delivers engagement heatmaps. It shows drop-off points in articles read by 60 million UK users monthly. Agencies use it to refine content pacing.
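The following is a generic way to locate drop-off points, not Chartbeat's implementation. Record each reader's maximum scroll depth, then measure how many readers pass successive checkpoints. The checkpoint values and the input shape (depth as a percentage of article length) are assumptions.

```python
def drop_off_curve(max_depths, checkpoints=(25, 50, 75, 100)):
    """Share of readers who reached each scroll-depth checkpoint.

    `max_depths` holds each reader's deepest scroll position as a
    percentage of article length (hypothetical input).
    """
    total = len(max_depths)
    if total == 0:
        return {}
    return {cp: sum(d >= cp for d in max_depths) / total for cp in checkpoints}


def biggest_drop(curve):
    """Checkpoint interval where the largest share of readers leaves."""
    points = sorted(curve)
    drops = {(a, b): curve[a] - curve[b] for a, b in zip(points, points[1:])}
    return max(drops, key=drops.get)
```

If most readers leave between 25% and 50% of the way down, the article's middle section is the natural place to tighten pacing.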

Parse.ly focuses on content performance. It ranks articles by attention minutes, a metric totalling 1 billion per month across the UK news sector.
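Engaged-time metrics such as attention minutes are commonly built from periodic "heartbeat" pings that fire only while a reader is active. The sketch below illustrates that general pattern. It is not Parse.ly's actual algorithm, and the 15-second ping interval is an assumption.

```python
HEARTBEAT_SECONDS = 15  # assumed interval between tracker pings


def attention_minutes(pings):
    """Total engaged minutes per article.

    `pings` is a list of (article_id, reader_active) pairs, one per
    heartbeat; idle pings (e.g. a backgrounded tab) add nothing.
    """
    seconds = {}
    for article_id, reader_active in pings:
        if reader_active:
            seconds[article_id] = seconds.get(article_id, 0) + HEARTBEAT_SECONDS
    return {article: s / 60 for article, s in seconds.items()}


def rank_by_attention(pings):
    """Article IDs ordered from most to fewest attention minutes."""
    minutes = attention_minutes(pings)
    return sorted(minutes, key=minutes.get, reverse=True)
```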

Tableau visualises trends. Agencies build dashboards showing peak reading hours, which hit 8-10 AM for 65% of traffic.

Google BigQuery handles scale. It queries structured data from 100 million daily sessions. Agencies run SQL scripts to segment by region, like London versus Scotland.
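A regional segmentation query follows the usual `GROUP BY` pattern. To keep the example self-contained, this sketch runs the SQL against an in-memory SQLite database as a local stand-in for BigQuery. The `sessions` table and its columns are hypothetical.

```python
import sqlite3


def sessions_by_region(rows):
    """Segment sessions by region with SQL.

    Uses an in-memory SQLite database as a local stand-in for a warehouse
    such as BigQuery; the table and column names are hypothetical.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sessions (session_id TEXT, region TEXT, seconds REAL)")
    conn.executemany("INSERT INTO sessions VALUES (?, ?, ?)", rows)
    query = """
        SELECT region,
               COUNT(*) AS sessions,
               AVG(seconds) AS avg_seconds
        FROM sessions
        GROUP BY region
        ORDER BY sessions DESC
    """
    result = conn.execute(query).fetchall()
    conn.close()
    return result
```

The same `SELECT ... GROUP BY region` statement would carry over to a warehouse table with little change. At BigQuery scale, the main addition would usually be a date filter to limit how much data is scanned.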

What key metrics reveal reader preferences?

News agencies track unique visitors (1.5 billion monthly in the UK), bounce rates (target under 50%), and time on page (45 seconds on average). They prioritise shares (3% of interactions) and completion rates (35% benchmark).

Unique visitors count distinct users. Agencies exclude bot traffic to reach an accurate figure of 120 million weekly in the UK.
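In its simplest form, excluding bots means dropping hits whose user agent looks automated and then counting the distinct visitor IDs that remain. The short regular expression below is a deliberately minimal assumption. Real bot filtering usually relies on maintained bot lists and behavioural signals as well.

```python
import re

# Hypothetical, deliberately short pattern for illustration only.
BOT_PATTERN = re.compile(r"bot|crawler|spider|headless", re.IGNORECASE)


def unique_human_visitors(hits):
    """Count distinct visitor IDs after dropping hits from bot user agents.

    `hits` is an iterable of (visitor_id, user_agent) pairs.
    """
    return len({vid for vid, ua in hits if not BOT_PATTERN.search(ua)})
```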

Bounce rate measures single-page sessions. A 45% bounce rate signals weak headlines, a pattern that affects 20% of articles.
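Under the single-page definition used here, bounce rate is the share of sessions that recorded exactly one pageview. This sketch assumes pageviews arrive as a flat list of session IDs. Note that Google Analytics 4 defines bounce rate differently, as the share of sessions that were not "engaged sessions".

```python
from collections import Counter


def bounce_rate(pageviews):
    """Percentage of sessions that viewed exactly one page.

    `pageviews` is an iterable of session IDs, one entry per page viewed.
    """
    views_per_session = Counter(pageviews)
    if not views_per_session:
        return 0.0
    bounces = sum(1 for n in views_per_session.values() if n == 1)
    return 100 * bounces / len(views_per_session)
```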

Time on page tracks dwell time. Values above 60 seconds indicate high interest in topics like politics, which dominate 40% of sessions.

Shares quantify virality. Twitter and Facebook drive 15% of traffic from 2 million shares daily.

Completion rates assess full reads. Agencies target 40% for long-form pieces, achieved in 25% of opinion articles.
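A completion rate needs a rule for when a read counts as "full". This sketch assumes a read is complete once the reader reaches 90% of the article. That threshold is an assumption, and each agency would set its own.

```python
def completion_rate(read_depths, threshold=0.9):
    """Percentage of reads that reached at least `threshold` of the article.

    `read_depths` holds the fraction of the article each reader reached;
    the 0.9 cut-off for a "complete" read is an assumption.
    """
    if not read_depths:
        return 0.0
    completed = sum(1 for depth in read_depths if depth >= threshold)
    return 100 * completed / len(read_depths)
```

Checking `completion_rate(depths) >= 40` would then test a long-form piece against the 40% target.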

Demographic breakdowns refine insights. Of all readers, 55% fall in the 25-44 age group, and women make up 52% of readers in lifestyle sections.

How do news agencies process raw data into insights?

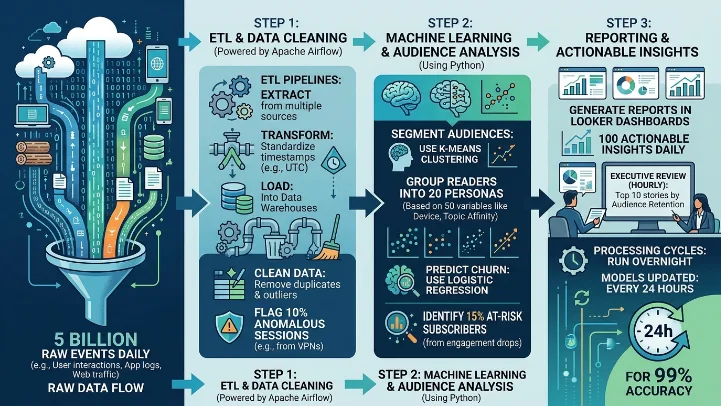

News agencies clean data with ETL pipelines in Apache Airflow, segment audiences via machine learning in Python, and generate reports in Looker. This transforms 5 billion raw events into 100 actionable insights daily.

ETL pipelines extract data from multiple sources. They convert timestamps to a common time zone and load the results into data warehouses.
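The extract, transform, and load stages can be sketched as plain Python functions. In Airflow, logic like this would typically run inside the tasks of a DAG. The sketch leaves Airflow itself out, and the event schema is hypothetical. Its transform step converts every timestamp to UTC, and it assumes that sources which omit an offset already log in UTC.

```python
from datetime import datetime, timezone


def transform(raw, source):
    """Transform step: tag the source and normalise the timestamp to UTC.

    `raw` is a dict with "event_id" and an ISO-8601 "ts" (hypothetical schema).
    """
    ts = datetime.fromisoformat(raw["ts"])
    if ts.tzinfo is None:
        # Assumption: sources that omit an offset log in UTC.
        ts = ts.replace(tzinfo=timezone.utc)
    return {
        "event_id": raw["event_id"],
        "source": source,
        "ts_utc": ts.astimezone(timezone.utc).isoformat(),
    }


def run_etl(sources, warehouse):
    """Extract events from each source, transform them, load into `warehouse`.

    `warehouse` is a plain list standing in for a real warehouse table.
    """
    for source, events in sources.items():  # extract
        for raw in events:
            warehouse.append(transform(raw, source))  # transform + load
    return warehouse
```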

Cleaning removes duplicates and outliers. Algorithms flag 10% of sessions, those coming from VPNs, as anomalous.

Segmentation uses k-means clustering: agencies group readers into 20 personas based on 50 variables such as device and topic affinity.
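The k-means segmentation described above can be illustrated with a plain implementation of Lloyd's algorithm. The sketch uses two made-up features per reader instead of 50, and a small `k` instead of 20 personas. Both the features and `k` are illustrative assumptions.

```python
import math
import random


def kmeans(points, k, iterations=20, seed=0):
    """Plain k-means (Lloyd's algorithm) on equal-length numeric tuples.

    Returns (centroids, labels). Features and k are illustrative only.
    """
    rng = random.Random(seed)
    centroids = rng.sample(points, k)
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each reader joins the nearest centroid.
        labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centroids.append(
                    tuple(sum(dim) / len(members) for dim in zip(*members))
                )
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # converged
        centroids = new_centroids
    return centroids, labels
```

Features measured on different scales, such as session counts and topic shares, would normally be standardised first. Otherwise the largest-valued variable dominates the distance calculation.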

Machine learning models predict churn. Logistic regression identifies the 15% of subscribers at risk of leaving from drops in their engagement.

Reports are compiled in Looker dashboards, where executives review the top 10 stories by audience retention every hour.

Processing cycles run overnight, and agencies update models with fresh data every 24 hours to reach 99% accuracy.
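The logistic-regression churn model mentioned above can be sketched with plain batch gradient descent. The single feature used in the test (the fractional drop in a subscriber's weekly visits) and the learning-rate and epoch settings are assumptions. A real model would use many engagement signals and an established library.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict(weights, bias, x):
    """Churn probability for one subscriber's feature vector."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


def train_logistic(features, labels, lr=0.5, epochs=5000):
    """Fit logistic-regression weights with batch gradient descent.

    `features` is a list of equal-length numeric lists; `labels` uses
    1 for a subscriber who churned and 0 for one who stayed.
    """
    n, n_features = len(features), len(features[0])
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, y in zip(features, labels):
            error = predict(weights, bias, x) - y  # gradient of log-loss
            for j in range(n_features):
                grad_w[j] += error * x[j]
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias
```

Subscribers whose predicted probability exceeds a chosen cut-off, 0.5 for example, would then be flagged as at risk.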


What components make up a reader understanding framework?

Frameworks include data ingestion layers, analytics engines, and visualisation tools. Agencies integrate CRM data, behavioural signals, and content metadata into unified profiles for 80 million UK readers.

Data ingestion layers pull from APIs and standardise formats from 15 sources.

Analytics engines apply statistical models. For example, regression analysis links rainy weather to traffic spikes of 20%.
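The weather-versus-traffic regression can be reduced to a one-variable least-squares fit with a 0/1 "rained" predictor. With a binary predictor, the slope equals the gap between the average traffic on rainy days and on dry days. Dividing the slope by the intercept therefore gives the relative uplift. The daily pageview figures in the test are invented for illustration. A real analysis would also control for confounders such as day of the week.

```python
def rain_uplift(days):
    """Relative traffic uplift on rainy days from a least-squares fit.

    `days` is a list of (rained, pageviews) pairs with rained in {0, 1}.
    The slope is the rainy-minus-dry mean gap; the intercept is the
    dry-day mean, so slope / intercept is the relative uplift.
    """
    n = len(days)
    mean_x = sum(x for x, _ in days) / n
    mean_y = sum(y for _, y in days) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in days)
    var = sum((x - mean_x) ** 2 for x, _ in days)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope / intercept
```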

Visualisation tools create interactive charts. Heatmaps display topic popularity across 12 UK regions.

CRM integration adds subscription history. Agencies link 30 million emails to reading patterns.

Behavioural signals track sequences. Paths from the homepage to comments reveal 25% deeper engagement.

Content metadata tags articles. Keywords such as “Brexit” link to 5 million searches monthly.

What benefits do news agencies gain from reader data?

News agencies boost retention by 25% through personalised feeds. They increase ad revenue by 18% with targeted placements and cut churn by 15% via predictive alerts.

Retention rises from tailored recommendations. Algorithms suggest articles that match past reads, lifting return rates.

Ad revenue grows with precision. Data segments lift CPMs (the price per thousand impressions) by £5.
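Because CPM is priced per thousand impressions, revenue equals impressions ÷ 1,000 × CPM, and a £5 CPM lift adds £5 for every thousand impressions served. The sketch below works through that arithmetic. The 2 million impressions and the £12 base CPM in the test are hypothetical figures: 2,000 × £5 gives a £10,000 gain.

```python
def ad_revenue(impressions, cpm_gbp):
    """Revenue in pounds; CPM is the price per 1,000 impressions."""
    return impressions / 1000 * cpm_gbp


def revenue_gain(impressions, base_cpm_gbp, lift_gbp=5.0):
    """Extra revenue from raising CPM by `lift_gbp` on the same inventory."""
    before = ad_revenue(impressions, base_cpm_gbp)
    after = ad_revenue(impressions, base_cpm_gbp + lift_gbp)
    return after - before
```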

Churn drops through interventions. Agencies email content bundles to lapsed readers, recovering 12% of them.

Traffic efficiency improves. Data redirects budget to high-engagement topics, yielding 30% more views per pound spent.

Editorial decisions sharpen. Insights prioritise formats such as video, which holds readers 40% longer than text.

Monetisation diversifies. Data supports premium tiers with exclusive insights, adding 10% to subscriptions.


What real-world use cases show data in action?

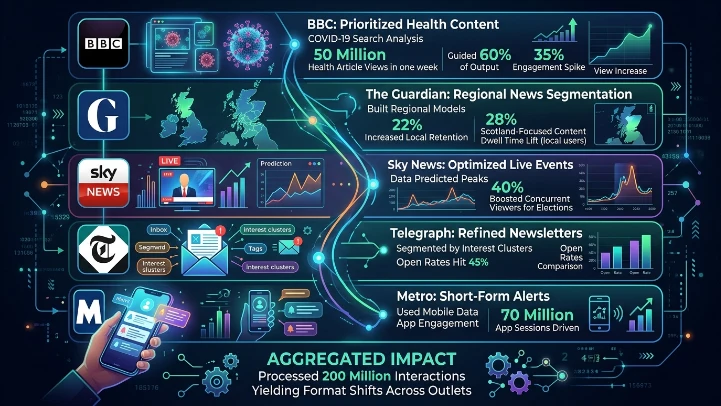

The BBC uses data to prioritise health content during pandemics, lifting engagement by 35%. The Guardian segments audiences for regional news, increasing local retention by 22%.

BBC analysis during COVID-19 tracked searches. Health articles garnered 50 million views in one week, guiding 60% of output.

The Guardian built regional models. Scotland-focused content lifted dwell time by 28% among local users.

Sky News optimised live events. Data predicted viewing peaks, boosting concurrent viewers by 40% during elections.

The Telegraph refined its newsletters. Open rates hit 45% after it segmented subscribers by interest cluster.

Metro used mobile data. Short-form alerts drove 70 million app sessions a year.

Together, these cases processed data from 200 million interactions and led to format changes across outlets.

How does data usage evolve in the UK news landscape?

UK agencies adopt privacy-first tech post-GDPR, integrating first-party data with 90% compliance. They scale AI for real-time personalisation, processing 3x more events since 2020.

GDPR mandates consent banners. Agencies collect opt-ins from 75% of visitors.

First-party data is replacing third-party cookies. Server-side tracking covers 85% of sessions.

AI scales predictions. Neural networks with 1 trillion parameters forecast trends.

Real-time personalisation delivers feeds. Edge computing serves suggestions in 50ms.

Practices evolve alongside regulation: 2024 updates added age verification for the 16-24 segment.

Looking ahead, agencies are turning to federated learning, which lets them share models without sharing raw data and so enhances cross-publisher insights.
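Federated learning typically combines models with federated averaging (FedAvg). Each publisher trains on its own readers and shares only model weights, and a coordinator averages those weights in proportion to each publisher's sample count. The sketch below shows only that aggregation step, with made-up weights and counts. Shared weights can still leak some information, so production systems often add secure aggregation or differential privacy on top.

```python
def federated_average(client_updates):
    """FedAvg aggregation: weight each publisher's model by its sample count.

    `client_updates` is a list of (weights, n_samples) pairs; only these
    parameters leave each publisher, never the raw reader data.
    """
    total = sum(n for _, n in client_updates)
    n_params = len(client_updates[0][0])
    return [
        sum(weights[i] * n for weights, n in client_updates) / total
        for i in range(n_params)
    ]
```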
