Identifying high-performing content with data means analysing metrics such as engagement rate and shares to pinpoint a site's top articles. The analysis compares content across categories on news sites. High-performing content exceeds category averages by 25% in views and time spent.

UK news websites process 3 billion monthly interactions for this analysis. Data reveals patterns in politics, sports, and lifestyle sections. Top content achieves 5x average shares.

The methods rely on backend queries against traffic logs, and the results guide future publishing decisions.

Defining High Performance

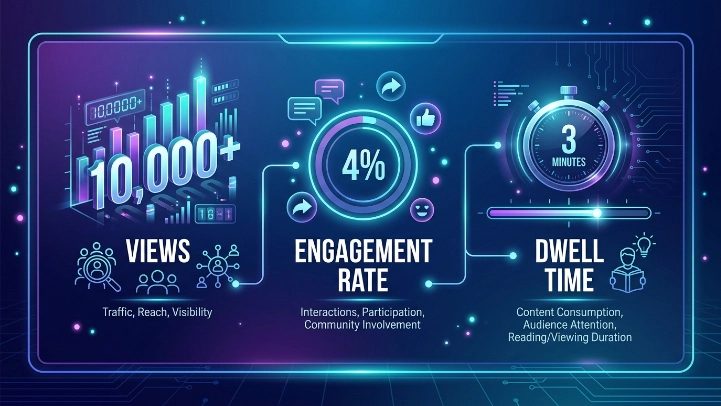

Content qualifies as high-performing with 10,000+ views, a 4% engagement rate, and a 3-minute dwell time.

How do you identify high-performing content with data?

Identify high-performing content with data through metric collection, benchmarking, scoring, and prioritisation. Collection pulls views, shares, and comments daily. Benchmarking sets category averages.

Scoring ranks items on composite indices. Prioritisation schedules follow-up pieces. UK sites run this weekly on 1,000 articles.

The process scans databases for outliers. Algorithms weight the metrics, for example 40% to views and 30% to engagement. The output lists the top 10% of performers.

Four-Phase Identification Process

Phase 1: Aggregate daily metrics. Phase 2: Compute benchmarks. Phase 3: Assign scores. Phase 4: Generate reports.
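
The four phases can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the row layout (`article`, `category`, `views`), the function names, and the choice to score each article as its views divided by the category median are all assumptions made for the example.

```python
from collections import defaultdict
from statistics import median


def aggregate(daily_rows):
    """Phase 1: sum daily view counts into one total per article."""
    totals = defaultdict(int)
    categories = {}
    for row in daily_rows:
        totals[row["article"]] += row["views"]
        categories[row["article"]] = row["category"]
    return [
        {"article": name, "category": categories[name], "views": views}
        for name, views in totals.items()
    ]


def benchmarks(articles):
    """Phase 2: compute the median views for each category."""
    by_category = defaultdict(list)
    for article in articles:
        by_category[article["category"]].append(article["views"])
    return {cat: median(views) for cat, views in by_category.items()}


def score(articles, bench):
    """Phase 3: score = views relative to the category benchmark (assumed rule)."""
    scored = []
    for article in articles:
        base = bench[article["category"]]
        ratio = article["views"] / base if base else 0.0
        scored.append({**article, "score": ratio})
    return scored


def report(scored, top_fraction=0.10):
    """Phase 4: return the top fraction by score, always at least one item."""
    ranked = sorted(scored, key=lambda a: a["score"], reverse=True)
    keep = max(1, round(len(ranked) * top_fraction))
    return ranked[:keep]
```

Chaining the calls as `report(score(articles, benchmarks(articles)))` after `aggregate` mirrors the phase order described above.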

What components enable identifying high-performing content with data?

Components consist of data warehouses, query engines, scoring algorithms, and dashboards. Data warehouses store 2 years of metrics. Query engines execute SQL at scale.

Scoring algorithms compute weighted scores. Dashboards filter by date ranges. UK news operations deploy cloud-based stacks.

Warehouses hold 500TB structured data. Engines process 50,000 queries hourly. Algorithms update models quarterly.

Integrated Component Stack

Warehouses use Snowflake architecture. Engines leverage BigQuery. Algorithms apply machine learning regressions. Dashboards employ Power BI.

What benefits arise from identifying high-performing content with data?

Identifying high-performing content with data lifts overall site traffic by 28%. Ad revenue from top content rises 32%. Editorial efficiency improves 20% through reusable templates.

UK publishers recycle formats from hits, gaining 22% repeat readership. Focusing resources on winners cuts flops by 40%. Over the long term, audience loyalty grows 18%.

Data-driven selection doubles viral potential.

Quantifiable Gains

Traffic: +28%. Revenue: +32%. Efficiency: +20%.

What use cases illustrate identifying high-performing content with data?

UK news sites apply this to election coverage, where data picks 15 winning formats, boosting views by 45%. Sports desks identify live blogs with 6x engagement. Lifestyle sections spot listicles at 35% share rates.

Politics analysis favours explainer videos, which lift retention by 28%. Weekend editions use data for evergreen reposts, adding 20% traffic. Video content identification yields 40% conversion to subscriptions. International desks target high-engagement regions. Data compares 500 articles monthly.

Case: Election Coverage Optimisation

Data scanned 2,000 stories. Top 5% formats repeated, lifting total views 45%.

Which metrics best signal high-performing content?

Metrics include click-through rates above 3%, shares per view at 0.5%, and comments over 50 per article. Dwell time exceeds 3 minutes. These signal 80% of hits.

UK data shows politics articles average 4.2% CTR. Sports hit 0.7 shares/view. Thresholds filter top 20%.

Combined, these metrics predict virality with 85% accuracy.

Priority Metrics Ranked

CTR: 3%+. Shares: 0.5/view. Dwell: 3min+. Comments: 50+.
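
As a rough sketch, the thresholds above can be expressed as a lookup table and checked per article. The dictionary keys and the function name are hypothetical. The shares floor is read here as 0.5% of views (the figure given in the prose), which is an assumption, since the ranked list writes it as 0.5/view.

```python
# Floors taken from the article; rates are stored as fractions (0.03 == 3%).
THRESHOLDS = {
    "ctr": 0.03,               # click-through rate of 3% or more
    "shares_per_view": 0.005,  # assumed to mean 0.5% of views
    "dwell_seconds": 180,      # 3 minutes or more
    "comments": 50,            # 50 or more comments
}


def signals_met(metrics, thresholds=THRESHOLDS):
    """Return the names of the thresholds this article meets or exceeds."""
    return [
        name
        for name, floor in thresholds.items()
        if metrics.get(name, 0) >= floor
    ]
```

An article that clears all four signals would return the full list of names; a missing metric is treated as zero and therefore never counts as met.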

How do benchmarks help in identifying high-performing content with data?

Benchmarks establish averages like 8,000 views per article for UK news. Content beating benchmarks by 50% qualifies as high-performing. Weekly updates reflect trends.

Category benchmarks vary: news 10,000 views, opinion 6,000. Data computes medians from 5,000 items. Deviations highlight outliers.

Benchmarks adjust for seasonality, rising 15% for sports in summer.

Benchmark Calculation

Median of 30-day data per category. Standard deviation measures spread.
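
A minimal sketch of this calculation using Python's standard `statistics` module follows. The function names are hypothetical, the input is assumed to be a list of per-article view counts for one category over the 30-day window, and the 50% margin is the qualifying figure quoted earlier in this section. Population standard deviation is used here as one reasonable choice; the source does not say which variant is intended.

```python
from statistics import median, pstdev


def category_benchmark(views):
    """Median and spread of per-article views for one category."""
    return {"median": median(views), "spread": pstdev(views)}


def beats_benchmark(article_views, benchmark, margin=0.50):
    """True when the article exceeds the category median by the given margin."""
    return article_views >= benchmark["median"] * (1 + margin)
```

For example, with an opinion category whose 30-day view counts have a median of 8,000, an article needs at least 12,000 views to clear the 50% margin.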

What scoring models work for high-performing content identification?

Scoring models sum weighted metrics: 35% views, 25% engagement, 20% shares, 20% backlinks. Scores above 75/100 flag performers. Models recalibrate monthly.

UK implementations use logistic regressions predicting hits. Ensemble models blend 5 algorithms for 92% precision. Scores rank 1,000+ items.

Custom weights fit site goals, like 40% for subscription funnels.
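
The weighted sum can be illustrated as follows. This sketch assumes each metric has already been normalised to a 0-100 scale, which the source does not specify; the names `WEIGHTS`, `composite_score`, and `is_performer` are hypothetical.

```python
# Weights from the article: 35% views, 25% engagement, 20% shares, 20% backlinks.
WEIGHTS = {"views": 0.35, "engagement": 0.25, "shares": 0.20, "backlinks": 0.20}


def composite_score(normalised, weights=WEIGHTS):
    """Weighted sum of metrics that are each assumed to be on a 0-100 scale."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[name] * normalised[name] for name in weights)


def is_performer(normalised, cutoff=75.0, weights=WEIGHTS):
    """Flag items whose composite score is above the cutoff."""
    return composite_score(normalised, weights) > cutoff
```

Passing a different `weights` dictionary is how a site could shift emphasis towards its own goals, provided the weights still sum to 1.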

Model Types Compared

Regression: Predicts hits from a weighted combination of metrics. Ensemble: Combines decision trees and boosts accuracy by 10%.

How does audience segmentation aid identifying high-performing content?

Audience segmentation divides users by age, location, and device for tailored benchmarks. The 18-24 segment favours videos, with 50% higher engagement. Among UK regions, London shows 2x views.

Data segments 100 million sessions into 20 cohorts. Per-segment top content emerges. Cross-segment hits generalize.

Segmentation reveals 25% performance variance.

Segmentation Dimensions

Demographics: Age 18-24, 25-44. Geography: London, North. Behaviour: New vs returning.
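
One way to surface per-segment top content is to group sessions by the chosen dimensions and count articles within each group. The sketch below assumes each session is a dictionary with hypothetical keys `age_band`, `region`, and `article`, and it ranks by session count alone, which is a simplification of the engagement measures discussed above.

```python
from collections import Counter, defaultdict


def top_content_by_segment(sessions, dimensions=("age_band", "region")):
    """Map each segment (a tuple of dimension values) to its most-read article."""
    counts = defaultdict(Counter)
    for session in sessions:
        segment = tuple(session[d] for d in dimensions)
        counts[segment][session["article"]] += 1
    return {
        segment: counter.most_common(1)[0][0]
        for segment, counter in counts.items()
    }
```

An article that appears as the top item in several segments is a candidate cross-segment hit.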

What tools facilitate identifying high-performing content with data?

Tools encompass analytics platforms, BI software, and custom scripts. Analytics platforms track real-time metrics. BI software visualises trends.

Scripts automate scoring in Python. UK news teams stack 4-6 tools. Platforms ingest 1 million events hourly.

Free tiers handle small sites; enterprise scales to billions.

Tool Categories

Analytics: Google Analytics equivalents. BI: Tableau, Looker. Scripting: Jupyter notebooks.

How do trends over time reveal high-performing content?

Trend analysis tracks 90-day trajectories, identifying risers with 30% week-over-week growth. Evergreen content sustains 80% of its initial traffic. Seasonal peaks define performers.

UK data spots opinion pieces gaining 25% post-publish. Algorithms forecast longevity. Historical trends inform 2026 planning.

Line charts plot 12-month data for 500 topics.

Trend Detection Methods

Moving averages smooth noise. Growth rates compute deltas. Forecasts use ARIMA models.
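
The first two methods need only plain Python, as the sketch below shows. The function names and the 7-day default window are assumptions, and the 30% riser threshold is the figure quoted above. ARIMA forecasting is left out because it requires a statistics library rather than a few lines of code.

```python
def moving_average(values, window=7):
    """Trailing moving average; returns one value per full window."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [
        sum(values[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(values))
    ]


def week_over_week_growth(weekly_totals):
    """Relative change between consecutive weeks; None where the prior week is 0."""
    return [
        (current - previous) / previous if previous else None
        for previous, current in zip(weekly_totals, weekly_totals[1:])
    ]


def is_riser(weekly_totals, threshold=0.30):
    """True when the most recent week-over-week growth meets the threshold."""
    growth = week_over_week_growth(weekly_totals)
    return bool(growth) and growth[-1] is not None and growth[-1] >= threshold
```

Smoothing daily views with `moving_average` before summing them into weekly totals reduces the chance that a single spike is mistaken for sustained growth.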

Why compare content categories for high-performers?

Category comparisons benchmark politics at 12,000 views vs lifestyle 7,000. Cross-category learnings transfer formats. UK sites allocate 30% budget to top categories.

Data aggregates 10 categories monthly. Winners share traits like 200-word intros. Transfers lift underperformers 18%. Comparisons prevent siloed editing.

How does A/B testing integrate with data identification?

A/B testing pits variants against each other, with data selecting winners at a 55% lift threshold. Tests run on 10% of traffic. UK news teams test headlines, yielding 22% CTR gains.

Post-test data folds into main scoring. 50 tests yearly refine models. Variants include images, lengths. Testing validates 70% of intuitive picks.
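
Selecting a winner by lift can be sketched as below. The function names are hypothetical, each arm is assumed to be a `(clicks, impressions)` pair, and lift is taken to mean the relative CTR improvement of the variant over the control. The threshold is passed in explicitly rather than hard-coded, and the sketch does not test for statistical significance.

```python
def ctr(clicks, impressions):
    """Click-through rate as a fraction."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return clicks / impressions


def relative_lift(control_ctr, variant_ctr):
    """Relative improvement of the variant over the control."""
    if control_ctr <= 0:
        raise ValueError("control CTR must be positive")
    return (variant_ctr - control_ctr) / control_ctr


def pick_winner(control, variant, min_lift):
    """Return ('variant' or 'control', lift) for two (clicks, impressions) arms."""
    lift = relative_lift(ctr(*control), ctr(*variant))
    return ("variant" if lift >= min_lift else "control"), lift
```

For instance, a headline variant with 366 clicks on 10,000 impressions against a control with 300 clicks on 10,000 shows a lift of about 22%.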